Haste makes Waste - When Garbage Collection Throws Sui's Mysticeti-FPC Safety Out The Window

Haste makes Waste - When Garbage Collection Throws Sui's Mysticeti-FPC Safety Out The Window

The previous posts in our consensus series covered the fundamentals of consensus algorithms and highlighted the fragility of theoretical liveness guarantees in partially synchronous systems. In this post, we'll go a step further, diving deeper and examining how safety guarantees can also be broken too.

If you haven't read the previous posts yet, we highly recommend giving them a quick look to familiarize yourself with consensus algorithms, as this post builds directly on the concepts introduced earlier.

Friendly reminder:

- Safety ensures all honest validators agree on the ledger start

- Liveness ensures honest validators keep updating the ledger state

Read more here 👇

Breaking the Ordering Shackles

To start, let’s take a step back and reconsider the design choices—and inherent limitations—of the consensus algorithms we’ve discussed so far.

One of the main challenges faced by consensus algorithms is latency. Beyond the baseline communication overhead between validators, which has largely been optimized over time, another significant source of delay is that transaction execution typically only occurs after consensus has been reached. This increases the time between transaction submission and the availability of execution results.

Various techniques have been proposed to mitigate this issue. A popular approach is optimistic execution, where validators speculatively execute transactions from blocks that are likely to be finalized, before consensus is fully reached. If the eventual consensus decision diverges, the speculative results are discarded.

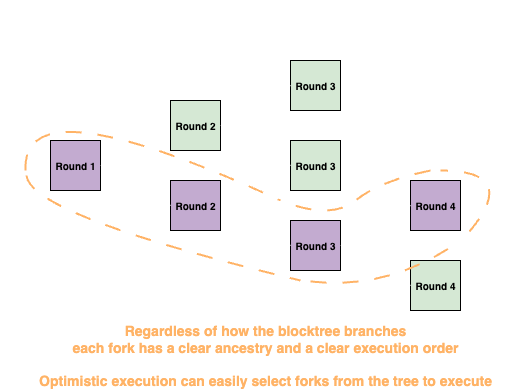

For chain-based consensus protocols, selecting blocks for optimistic execution is relatively straightforward due to their linear block ancestry.

For DAG-based ones, on the other hand, selection becomes a nightmare due to the lack of clear block ordering before commit.

The algorithm we're going to discuss in this blog is a clever heuristic devised to assist Sui's Mysticeti in choosing transactions for optimistic execution

Recall that safety comprises of two ingredients: agreement and total order. Agreement is non-negotiable. If an action is executed by only a subset of validators, the resulting state will almost certainly diverge from that of validators that did not execute it.

The same, however, cannot be said for total order.

To better understand this, let's look at an analogy. In the physical world, numerous financial transactions happen all the time, and a lot of them are carried out in parallel. For example, Alice can buy a croissant at the bakery, while Bob is getting his coffee at another cafe down the street, all at the same time! Why is this possible? The idea is pretty simple: both Alice and Bob are spending their own money (think in physical dollar bills), and the order in which the two transactions happens wouldn't affect the final outcome.

By generalizing this concept, it turns into a powerful primitive. If ownership of assets (or in more general terms—objects) are well defined, and only the owner of an object can perform actions upon it, then transactions involving disjoint sets of owned objects no longer have to be ordered!

This idea is explored in several research papers, from the earlier Fastpay to later ones such as Sui-Lutris and Mysticeti-FPC. Unsurprisingly, given Sui research team's involvement in these academic works, the Sui blockchain is also designed around this primitive. By following an object-centric design and incorporating the idea of ownership, it becomes possible to take advantage of relaxed ordering requirements for higher throughput and shorter latencies.

Mysticeti-FPC

With a high-level understanding of the goal, time to shift our attention to the star of the show, Mysticeti-FPC.

Mysticeti-FPC is built on Sui's Mysticeti-C. Most rules and DAG structures from Mysticeti-C carry over, with additional mechanisms layered on top to create an optimistic execution path for owned objects.

To fully appreciate these mechnanisms, it is crucial to have a basic understanding of how Sui objects work. Broadly speaking, there are two types of objects:

- Owned : The object is governed by a private key, and only the owner of the key may sign and authorize actions on the object

- Shared : Any user can perform actions on these objects, as long as they satisfy the constraints defined by the associated on-chain logic (smart contract).

Given these designs, a few observations follow:

- Any transaction involving shared objects must still undergo total ordering, since they don't satisfy the ownership abstraction we just discussed

- Transactions involving only owned objects can largely be executed without any ordering decision from consensus, with the only exception being when the object sets involved are not disjoint

The crux of the problem then becomes: how can validators order transactions that touch the same owned object without going through the slower consensus procedure?

Mysticeti-FPC's answer is quite mesmerizing: it doesn't.

Instead of attempting to order transactions involving the same owned objects, validators instead focus on detecting and blocking any potential misordered transactions from executing. The intuition is that owned objects are governed by private keys, meaning the owners have full authority over them and should be able to sequence any actions correctly. In other words, if an owner attempts to perform multiple actions on the same object simultaneously, that behavior can itself be treated as malicious and blocked^2^.

By shifting the responsibility for ordering onto the user, validator algorithms can be significantly simplified while still benefiting from the ownership abstraction model. Of course, this approach doesn't come without complications, which we will explore later.

So far, the discussion has been somewhat abstract and unrigorous. Let's move on to a formal definition of the algorithm. As usual, we start with the terminology that will be needed throughout the blog.

Terminology

-

Self Ancestors(B) / Self Descendants(B): Self ancestors / descendants of a block

Brefer to the set of ancestors / descendants ofBthat are created byB.author -

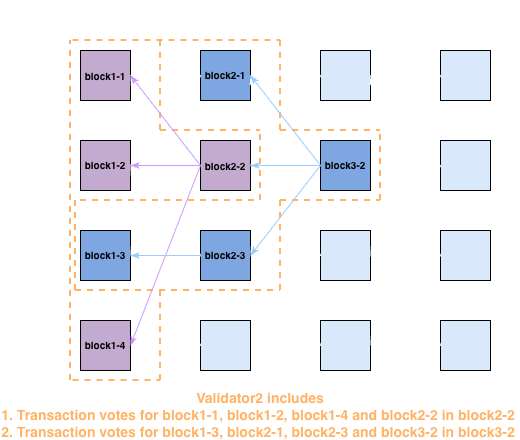

Transaction Vote: Validators cast invalid votes on

(block, transaction_within_block)pairs for transactions they consider invalid:: RUST1// This represents invalid votes to transactions in block 2struct PerBlockTransactionInvalidVotes { 3 block: Block, 4 invalids: Vec<Transaction>, 5} 6 7// Invalid votes for transactions in received blocks are 8// included into the immediate next block the validator produces 9struct Block { 10 ... 11 transaction_votes: Vec<PerBlockTransactionInvalidVotes>, 12}To remove the need of repeatedly embedding the same vote in multiple blocks, self-descendant blocks automatically inherit votes from self-ancestor blocks. For example, the diagram below shows validator2's vote for block1-1 casted by block2-2, and block3-2 inherit it instead of having to re-include the vote again

-

Owned object identifier: Owned object can be identified by the

(owned_object_id, version)tuple.owned_object_idstays constant throughout the lifetime of the owned object, whileversionadvances every time a transaction executes and updates the object. In essence, this means an owned object identifer should only ever be associated with one executed transaction. Once the transaction is executed, the version of the object advances, and the original identifier becomes deprecated -

Lock Table: Each validator maintains a local lock table mapping owned object identifier tuples to

Option<Transaction>, which represents the owned objects used by transactions it considers "valid" -

Conflicting Transactions: Transactions are said to be conflicting if they touch the same owned object identifier

-

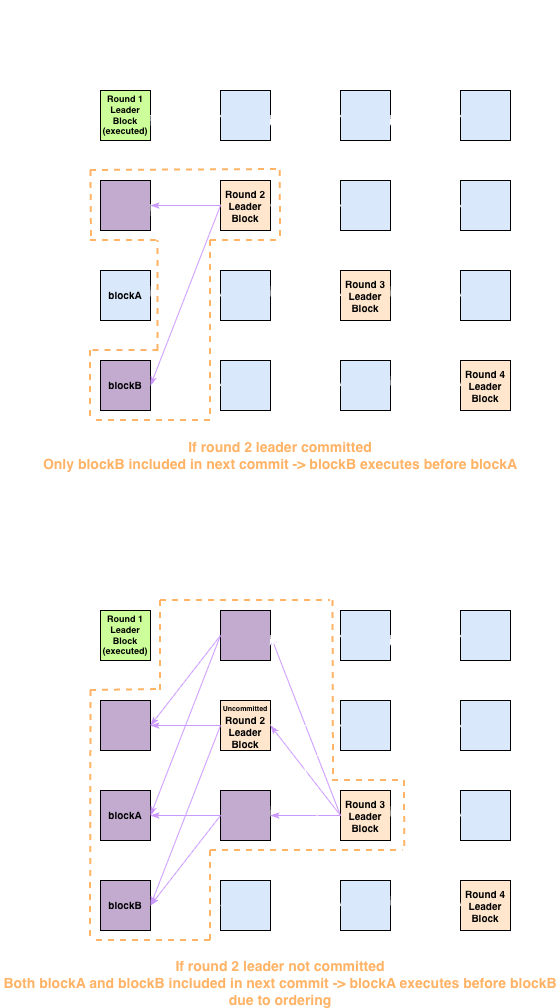

Transaction Finalization: After a leader block is Mysticeti-C committed, each transaction must be individually checked for acceptance or rejection. Upon decision for all transactions within a commit subDAG, the subDAG becomes ready for transaction finalization, and will be transaction finalized once all preceding committed subDAGs are also finalized. Here is a rough description of the acceptance and rejection rules:

- Transactions can only be accepted if they gather a supermajority of implicit valid votes within a bounded round (precise definitions will be discussed in the algorithm section)

- Otherwise transactions are rejected

-

Optimistic Execution: A transaction may be chosen for execution before it is finalized. The execution result will be cached until it is either

- committed once the transaction is finalized and accepted

- discarded if the transaction is finalized and rejected

Algorithm

The core mechanisms of Mysticeti-FPC revolve around three topics:

- How validators decide whether a transaction is valid or not (i.e. whether an invalid vote should be cast)

- How validators choose transactions for optimistic execution

- How transaction acceptance and rejection are decided once a subDAG is committed

Let's start with the easier part—how validators vote.

Whenever a new block is included into the local DAG, a validator iterates through all transactions and attempts to acquire a lock for each used owned object identifier in the lock table. If all owned object identifiers involved in a transaction are lockable (i.e. no other locks already exist for the objects), then the transaction is considered valid. Otherwise, it means the user must have sent multiple conflicting transactions, and the validator observed another one first. In this case, the validator settles on an invalid vote instead.

After the votes have been created, they are collected and included in the next produced block for casting and propagation.

Notably, any block must either directly contain or implicitly inherit votes for all transactions within its causal history. This is important for upholding the vote inheritance assumptions required for transaction finalization later.

:: PYTHON1def transaction_lockable(self, transaction): 2 for obj in transaction.owned_objects: 3 # if the object is already locked by a different transaction, the user 4 # must have "misbehaved" 5 if self.lock_table[obj.identifier] is not None and 6 self.lock_table[obj.identifier] != transaction: 7 return False 8 return True 9 10def acquire_locks(self, transaction): 11 for obj in transaction.owned_objects: 12 # lock objects to allow detection of misbehaviors in later transactions 13 self.lock_table[obj.identifier] = transaction 14 15def get_self_vote_for_block(self, block): 16 vote = PerBlockTransactionInvalidVotes{ 17 block: block, 18 invalids: [], 19 } 20 for transaction in block.transactions: 21 if self.transaction_lockable(transaction): 22 self.acquire_lock(transaction) 23 else: 24 vote.invalids.append(transaction) 25 self.self_block_votes[block] = vote 26 27def receive_block(self, block): 28 ... 29 self.get_self_vote_for_block(block) 30 ... 31 32def propose_new_block(self, current_round): 33 ... 34 parents = self.select_parents(current_round) 35 votes = [] 36 for parent in parents: 37 # Block must include votes for all transactions in causal history that 38 # haven't been voted on yet by any self-anc.estors 39 for ancestor in self.store.causal_history(parent): 40 if self.already_casted_vote[ancestor]: 41 continue 42 self.already_casted_vote[ancestor] = True 43 votes.append(self.self_block_votes[ancestor]) 44 ... 45 return self.new_block(current_round, parents, votes, ...)

This pretty much sums up the vote casting part. Next comes handling votes in received blocks.

Whenever a validator includes a new block into its local DAG, it also does a quick (but slightly imprecise) tally of invalid vote within the block to assist decision on whether a transaction is suitable for optimistic execution.

The selection rules can be summarized as:

- If a transaction uses any shared objects, it is inappropriate for optimistic execution

- If a transaction has gathered a supermajority of invalid votes, it is inappropriate for optimistic execution

- If a transaction has gathered a supermajority of implicit valid votes (voting power of validators that have created descendants of the block - voting power of invalid votes), it is ready to be scheduled for optimistic execution

Once all transactions in a block have their quick tallying results ready, the transactions suitable for optimistic execution will be pushed to the executor for scheduling.

:: PYTHON1def tally_votes(self, block): 2 def ensure_vote_cache_entry(block): 3 if block not in self.vote_cache: 4 self.vote_cache[block] = VotingResult{ 5 invalids: dict(), 6 voted: set(), 7 resolved: False, 8 } 9 10 # causal_history includes the block itself 11 for ancestor in self.store.causal_history(block): 12 ensure_vote_cache_entry(ancestor) 13 # voted track validators that have already seen a block. deduct 14 # invalid votes from it and we get the amount of voting power that 15 # considers a transaction valid 16 self.vote_cache[ancestor].voted.add(block.author) 17 18 # track the votes included in block 19 for per_block_transaction_invalid_votes in block.transaction_votes: 20 voted_block = per_block_transaction_invalid_votes.block 21 invalids = per_block_transaction_invalid_votes.invalids 22 ensure_vote_cache_entry(voted_block) 23 for transaction in invalids: 24 # invalids keeps track of the set of validators that casted 25 # invalid votes for each transaction 26 if transaction not in self.vote_cache[voted_block].invalids: 27 self.vote_cache[voted_block].invalids[transaction] = set() 28 self.vote_cache[voted_block].invalids[transaction].add(block.author) 29 30 # add the validator's own votes for block immediately instead of waiting 31 # for next proposal creation 32 for transaction in self.self_block_votes[block].invalids: 33 if transaction not in self.vote_cache[block].invalids: 34 self.vote_cache[block].invalids[transaction] = set() 35 self.vote_cache[block].invalids[transaction].add(block.author) 36 37def try_optimistic_execution(self, block): 38 for ancestor in self.store.causal_history(block): 39 vote_cache = self.vote_cache[ancestor] 40 # already resolved block, don't do repetitive work 41 if vote_cache.resolved: 42 continue 43 # a supermajority of validators must consider transaction valid to 44 # invoke optimistic execution 45 if not self.has_supermajority(vote_cache.voted): 46 continue 47 schedulable = [] 48 ready = True 49 for transaction in ancestor.transactions: 50 # transactions involving shared objects must wait for ordering 51 if self.uses_shared_objects(transaction): 52 continue 53 if transaction in vote_cache.invalids: 54 # a supermajority has deemed the transaction invalid, the 55 # transaction is guaranteed to be rejected by all validators 56 if self.has_supermajority(vote_cache.invalids[transaction]): 57 pass 58 # a supermajority implicit valid votes are seen. no other 59 # transaction using overlapping owned objects will get 60 # supermajority implicit valid votes. it's worth scheduling 61 # the transaction for optimistic execution 62 else if self.has_supermajority( 63 vote_cache.voted - vote_cache.invalids[transaction] 64 ): 65 schedulable.append(transaction) 66 # some transactions in the block haven't seen enough votes 67 # yet. wait for more evidence 68 else: 69 ready = False 70 break 71 else: 72 # a supermajority implicit valid votes are seen 73 schedulable.append(transaction) 74 if ready: 75 self.vote_cache[ancestor].resolved = True 76 # in rare cases the same transaction may be scheduled multiple 77 # times, the executor will handle it by deduplicating 78 self.schedule_for_execution(schedulable) 79 80def receive_block(self, block): 81 ... 82 self.get_self_vote_for_block(block) 83 self.tally_votes(block) 84 self.try_optimistic_execution(block) 85 ...

And finally, we reach the handling of transaction finalizations.

For this part of the algorithm, validators iterate through each queued commit and attempt to decide whether to accept or reject each transactions within its subDAG. Upon making decisions for all transactions within its subDAG, a commit becomes transaction finalized. Commits must be transaction finalized in the order they were committed, so later commits will only be processed once all earlier ones are fully resolved.

Broadly speaking, transaction finalization can be split into 3 main steps:

- Attempt to decide transaction acceptance based on cached votes in

vote_cache. This step (try_decide_with_cache) performs 2 checks for each transaction:- If a transaction has a supermajority of invalid votes in the

vote_cache, it will be rejected - If at the time of processing, the transaction doesn't have any invalid votes in the

vote_cache, it will be accpted

- If a transaction has a supermajority of invalid votes in the

- If the cached votes are insufficient to decide for a transaction, the validator will recount the valid votes to assess whether each transaction can be finalized (

try_accept_recount). The recounting here is to address the inaccuracy wherevote_cachemay underestimate the amount of implicit valid votes if adversaries equivocate. The logic works as follows:- Validators iterate the descendant blocks of the target block (block containing the voted transaction) that are part of any committed subDAGs

- Within the set of descendant blocks, any block with their self-ancestors also found in the set are filtered out. This filtering is based on the assumption that all blocks are assumed to include or inherit votes for their entire causal history, so it is both unncessary and inappropriate to look at a self-descendant for votes for transactions in a block's own causal history

- From the remaining set of descendant blocks without self-ancestors, the validator filters out all blocks that include an invalid vote for the transaction. The remaining blocks without an invalid vote are treated as including implicit valid votes, and the implicit valid votes are tallied

- If a supermajority has cast implicit valid votes, the transaction is accepted

- Finally, if any transaction goes for extended time without being finalized, it is considered stale and rejected (

try_reject_stale). Time is measured as the round distance between the most recently committed leader and the leader of the subDAG the transaction is committed in, and theSTALE_THRESHOLDis set to 3 rounds.

The pseudocode here is a simplified version of the original implementation and has some differences in edge case handling. The differences shouldn't matter for the purpose of security analysis, but we recommend interested readers to dive into the actual Sui production code for a more in-depth understanding.

:: PYTHON1STALE_DEPTH = 3 2 3# construct a descendant graph to make it easier for further operations 4def update_descendant_graph(self, latest_commit): 5 def ensure_descendant_graph_entry(block): 6 if block not in self.descendant_graph: 7 self.descendant_graph[block] = DescendantState{ 8 # children directly linked to the block 9 children: set(), 10 # descendants created by the same validator as block 11 self_descendants: set(), 12 } 13 14 for block in latest_commit.sequenced_blocks: 15 for parent in block.parents: 16 ensure_descendant_graph_entry(parent) 17 self.descendant_graph[parent].children.add(block) 18 19 for ancestor in self.store.causal_history(block): 20 if ancestor.author == block.author: 21 ensure_descendant_graph_entry(ancestor) 22 self.descendant_graph[ancestor].self_descendants.add(block) 23 24def update_pending_commit( 25 self, pending_commit, accepted_transactions, rejected_transactions 26): 27 remaining_blocks = set() 28 for block in pending_commit.pending_blocks: 29 # filter out decided transactions 30 block.pending_transactions -= accepted_transactions 31 block.pending_transactions -= rejected_transactions 32 if len(block.pending_transactions) > 0: 33 remaining_blocks.add(block) 34 pending_commit.pending_blocks = remaining_blocks 35 pending_commit.rejected_transactions += rejected_transactions 36 37def try_decide_with_cache(self, pending_commit): 38 accepted_transactions = set() 39 rejected_transactions = set() 40 for block in pending_commit.pending_blocks: 41 for transaction in block.pending_transactions: 42 # blocks within a committed subdag must be ancestor of a certificate 43 # pattern. if no invalid votes have been observed, all validators 44 # contributing to the certificate pattern have casted implicit valid 45 # votes. a supermajority of valid votes is sufficient to accept the 46 # transaction 47 if transaction not in self.vote_cache[block.block].invalids: 48 accepted_transactions.add(transaction) 49 # if a supermajority of invalid votes are seen, it is impossible for a 50 # supermajority of implicit valid votes to exist. validator can reject 51 # transaction 52 else if self.has_supermajority( 53 self.vote_cache[block.block].rejects[transaction] 54 ): 55 rejected_transactions.add(transaction) 56 # the validator hasn't gathered enough evidence to decide on whether 57 # to accept or reject transaction. keep transaction in pending list 58 else: 59 pass 60 self.update_pending_commit( 61 pending_commit, accepted_transactions, rejected_transactions 62 ) 63 64def try_accept_recount(self, pending_commit): 65 def ensure_valid_votes_entry(valid_votes, transaction): 66 if transaction not in valid_votes: 67 valid_votes[transaction] = set() 68 69 accepted_transactions = set() 70 valid_votes = set() 71 for block in pending_commit.pending_blocks: 72 to_traverse_blocks = min_heap() 73 to_traverse_blocks.insert((block.block.round, block.block)) 74 to_skip = set() 75 traversed = set() 76 while to_traverse_blocks.not_empty(): 77 (current_block_round, current_block) = to_traverse_block.pop_min() 78 if current_block in traversed: 79 continue 80 traversed.add(current_block) 81 if current_block not in to_skip: 82 # self-descendants implicitly inherit the vote of self-ancestors 83 # skip to prevent accidentally counting omitted invalid votes 84 # as implicit valid votes 85 to_skip += self.descendant_graph.self_descendants[current_block] 86 87 vote_for_block = PerBlockTransactionInvalidVotes{ 88 block: block.block, 89 invalids: [], 90 } 91 for vote in current_block.transaction_votes: 92 if vote.block == block.block: 93 vote_for_block = vote 94 break 95 96 for transaction in block.pending_transactions: 97 if transaction not in vote_for_block.invalids: 98 ensure_valid_vote_entry(valid_vote, transaction) 99 valid_votes[transaction].add(current_block.author) 100 101 for child in self.descendant_graph[current_block].children: 102 to_traverse_block.insert((child.round, child)) 103 104 for transaction in valid_votes: 105 if self.has_supermajority(valid_votes[transaction]): 106 accepted_transactions.add(transaction) 107 108 self.update_pending_commit(pending_commit, accepted_transactions, set()) 109 110def try_reject_stale(self, pending_commit): 111 # any transaction that still can't be finalized past the STALE_DEPTH will be 112 # immediately rejected 113 if pending_commit.commit.leader.round + STALE_DEPTH 114 <= self.latest_commit.leader.round: 115 rejected_transactions = set() 116 for block in pending_commit.pending_blocks: 117 rejected_transactions += block.pending_transactions 118 self.update_pending_commit(pending_commit, set(), rejected_transactions) 119 120def process_commit(self, latest_commit): 121 pending_commit = PendingCommit{ 122 commit: latest_commit, 123 rejected_transactions: set(), 124 pending_blocks: set([ 125 PendingBlock{ 126 block: block, 127 pending_transactions: set(block.transactions), 128 } for block in latest_commit.sequenced_blocks 129 ]), 130 } 131 self.pending_commits.append(pending_commit) 132 self.latest_commit = latest_commit 133 134 self.update_descendant_graph(latest_commit) 135 136 while len(self.pending_commits) != 0: 137 pending_commit = self.pending_commits[0] 138 self.try_decide_with_cache(pending_commit) 139 self.try_accept_recount(pending_commit) 140 self.try_reject_stale(pending_commit) 141 142 if len(pending_commit.pending_blocks) == 0: 143 144 # push the entire commit to execution along with list of rejected 145 # transactions to skip 146 self.transaction_finalize( 147 pending_commit.commit, pending_commit.rejected_transactions 148 ) 149 self.pending_commits = self.pending_commits[1:] 150 else: 151 # transaction finalization must be in order 152 break

Despite a few nitty-gritty details here and there, the entire algorithm should not be too difficult to follow. Spend some time here to absorb it. Once ready, we'll move on to the correctness proofs of the algorithm.

Proof of Safety

The safety of Mysticeti-FPC hinges on three properties. Let's prove them one by one.

Lemma 1: Optimistic execution results for accepted transactions must always match non-optimistic execution results

Since Sui's transactions index input objects based on the owned object identifier, which uniquely identifies an immutable snapshot of an object (think of this as a UTXO. Any mutations of objects will not modify the original snapshot, but will instead be stored under a new version, hence a new owned object identifier). Transaction execution results depend only on:

- The deterministic state transition logic defined by Sui's execution layer

- The contents of input objects

Both must be consistent across optimistic and non-optimistic executions. Thus the results are guaranteed to be identical.

Lemma 2: Across all honest validators, no two conflicting transactions can both be accepted

This invariant is easily provable. A transaction is only accepted if at least one of the two relaxed conditions is met:

- It hasn't received any invalid votes upon being committed as part of a subDAG

- It has a supermajority of implicit valid votes

Upon further inspection, the first condition is a special case of the second one. At the time a block (and consequently, the transactions it includes) is committed as part of a subDAG, there must exist a certificate pattern for the leader block of the committed subDAG. Since any block included in the subDAG must be an ancestor of the commit leader block, it must also be an ancestor of all blocks forming the certificate pattern. In other words, a supermajority of validators must have already cast votes for the block and the transactions within it. Absence of invalid votes at this point directly implies a supermajority of validators must have already implicitly cast valid votes for the transaction. This collapses the first condition into the second, when a supermajority of validators implicitly cast valid votes for a transaction.

The second condition can be proven by our old friend—quorum intersection. Given that honest validators only allow one transaction to be associated with each owned object identifier, within a set of conflicting transactions, any honest validator will cast an implicit valid vote for at most one of the conflicting transactions. By the quorum intersection property, it then becomes evident that no two conflicting transactions can both gather a supermajority of implicit valid votes. Thus one transaction gathering a supermajority of implicit valid votes immediately indicates no other conflicting transaction may do so, guaranteeing rejection of all other conflicting transactions.

This proves our second property to be true.

Lemma 3: Acceptance and rejection decisions for all transactions must be agreed upon by all honest validators

To prove this property, first observe there are 3 ways validators can decide for a transaction:

- Decide with cache

- Accept through recounting implicit valid votes

- Reject through staleness

Decisions through the second and third options are based purely on information obtained from committed subDAGs, of which agreement is guaranteed by Mysticeti-C safety. Deterministic algorithms must return identical results when fed with identical inputs, thus any two honest validators that decide purely through these two options must satisfy agreement.

This leaves us with only the first option to analyze. In particular, it is necessary to discuss the 3 following scenarios:

- Any two honest validators that both decide with cache must make the same decision

- If any honest validator accepts through recounting implicit valid votes, it is impossible for other honest validators to reject with cache

- If any honest validator rejects through staleness, it is impossible for other honest validators to accept with cache

The first scenario can be proven by observing that the accept and reject cache decision rules are mutually exclusive. One requires a supermajority of implicit valid votes, and the other requires a supermajority of invalid votes. By the same quorum intersection rule, these two supermajority sets must overlap on at least one honest validator, and since honest validators will not cast multiple votes for a transaction, we have a contradiction.

Similarly, acceptance through recounting of votes in the second scenario asserts the existence of a supermajority of implicit valid votes, and also cannot coexist with a supermajority of reject votes necessary to reject with cache.

The final scenario requires a bit more introspection. By the Sui's Mysticeti-C commit rule, both direct and indirect decisions require a certificate pattern to exist, and the certificate pattern must be contained within the causal history of any future leaders where

Any block containing transactions accepted with cache must not have any invalid votes by the time its leader is committed. Combining this with the existence of a certificate guaranteed by Sui's Mysticeti-C, we conclude that a supermajority of blocks from descendant_graph.

Given that rejection due to staleness only happens after the first leader with descendant_graph guarantees the final recounting must succeed and accept the transaction. This proves that cache-accepted transactions will never end up being rejected due to staleness.

With these three properties, it can be shown that all honest validators must have agreement on the decision for each transaction. Combined with total ordering being trivially enforced by Mysticeti-C, safety of Mysticeti-FPC holds.

Proof of Liveness

Liveness can largely be extended from Sui's Mysticeti-C, except for some complications around the timing of handling undecided stale commits. However, since the focus of this post isn't on liveness and we've already discussed liveness extensively in a previous blog, we will omit this part of the discussion here.

Garbage Collection

So far, the design is reasonable. However, algorithms designed under idealistic models don't always translate directly to production-ready code.

Mysticeti-C Garbage Collection

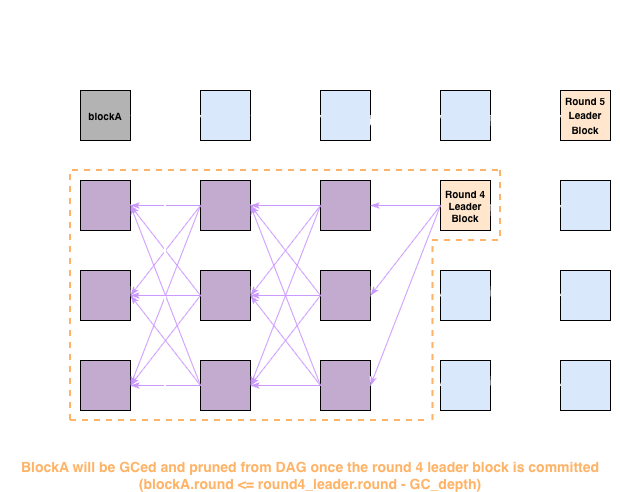

The algorithm presented above looks good on paper, but once it must be implemented, practical limitations arise. One major hurdle is the efficiency and memory usage concerns of storing and traversing a huge DAG in validator memory. To tackle this problem, Sui's Mysticeti-C employs a garbage collection mechanism that works as follows.

On each commit, the round of the leader block of the commit is taken, and all blocks with

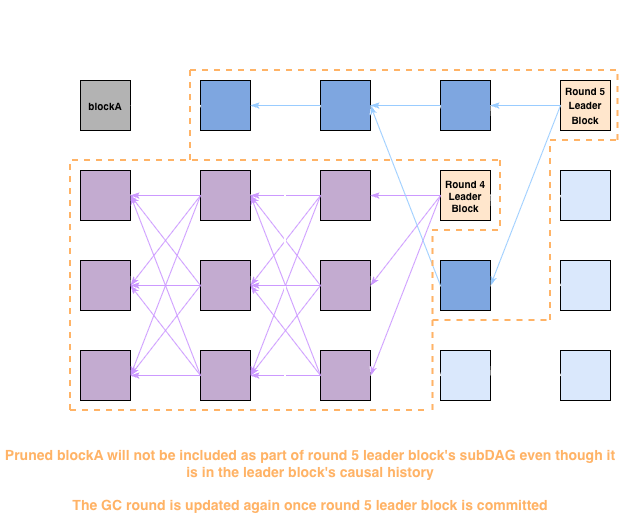

The visualization shows advancement of the GC round as leaders are committed:

-

As the Round 4 leader gets committed, the GC round will be updated. As a result, blockA will be pruned from the DAG since it hasn't been included in any committed subDAG yet

-

The pruned blockA will not be included in any future subDAG regardless of whether it is in the causal history of the committed leader

The garbage collection mechanism puts a practical limit on the amount of rounds validators have to keep in memory during synchronous network phases, alleviating the aforementioned memory and efficiency concerns. The downside of GC is that transactions within some honest validator-produced blocks may be dropped without being finalized, but this is not a major concern since all transactions can be resubmitted. Moreover, the production code uses

For Sui's Mysticeti-C, the correctness of GC trivially holds. An easy way to think about this: GC is a deterministic pruning threshold updated as part of each leader block commit. Since all validators agree on the order to commit leader blocks, the pruning threshold will also be updated consistently across all honest validators. As a result, honest validators will always agree upon the threshold to use when attempting to commit each leader block.

Mysticeti-FPC Garbage Collection

Mysticeti-FPC updates the garbage collection mechanisms since it changes how committed subDAGs are handled, as well as introduces additional caches for transaction votes. For the sake of clarity, we will refer to Mysticeti-C's logic of dropping blocks from the local DAG after commit as DAG-GC from now on.

The first observation is that the DAGs are mostly used to sequence blocks for commit, so DAG-GC can be updated once commit happens. In contrast, vote_cache and descendant_graph are used in transaction finalization, which may lag behind commits by a few rounds. This means those two data structures cannot be garbage collected using the same DAG-GC logic. Sui's approach is to introduce a separate Vote-GC procedure. The overall logic is the same—block entries in vote_cache and descendant_graph where

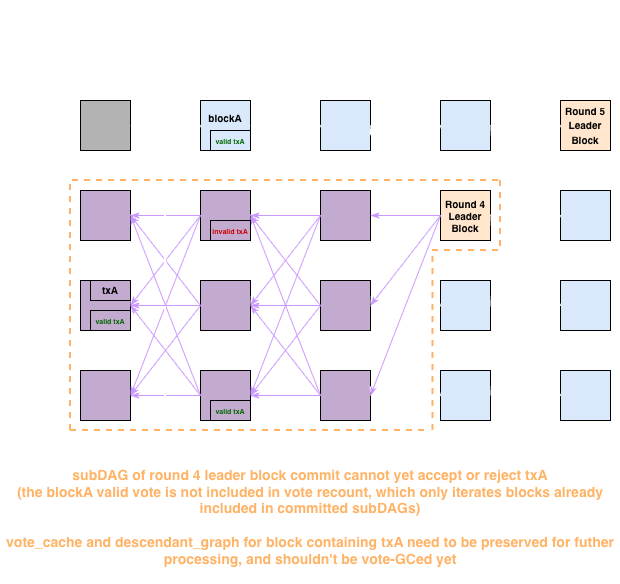

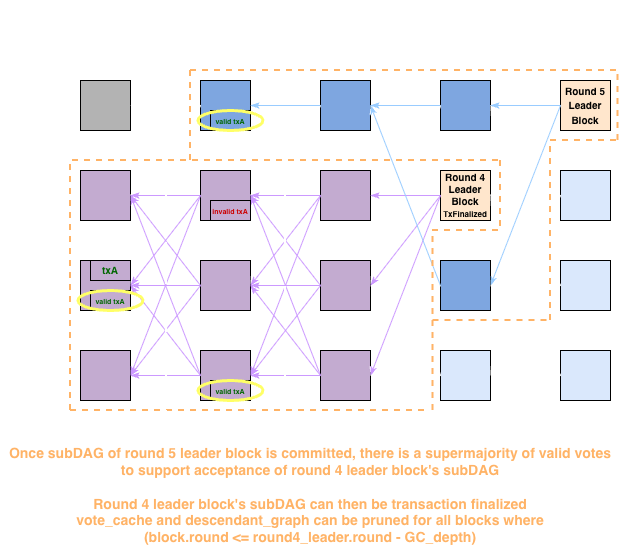

The visualization shows advancement of the

-

Committing a leader block itself is not sufficient to advance the

round. The voting information must be preserved to assist transaction finalization

-

Only after a leader block and its subDAG are transaction finalized, will the

round be updated

The design introduces a desync between DAG-GC and Vote-GC. Keep this in mind as it will become important in later discussions.

Also note that while valid votes are shown in the diagrams, they are in fact implicit and deducted from the absence of an explicit invalid vote.

Exploiting Sui's Mysticeti-FPC

In this section, we will analyze Mysticeti-FPC under an asynchronous network, since safety must hold regardless of synchrony assumptions.

Mysticeti-C GC's correctness can be trivially claimed. Does this also extend to Mysticeti-FPC?

The answer, unfortunately, is no.

To understand why, let's revisit some of the important design choices, starting with:

-

Blocks are assumed to inherit votes from self-ancestors. In other words, it is impossible to tell the transaction vote of a block without its full self-ancestry up to the voted block

This requirement of "full ancestry up to the voted block" should immediately raise concerns, since careless pruning can easily break this assumption. This is exactly where cracks begin to appear in Mysticeti-FPC’s garbage collection mechanism. The issue can be illustrated with the following example.

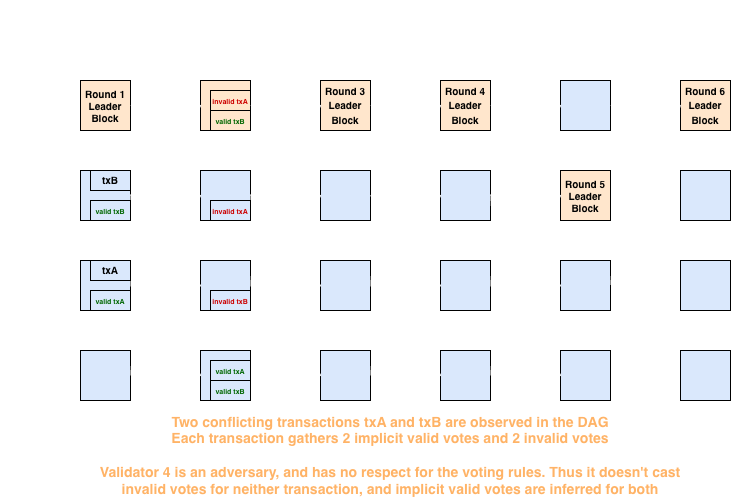

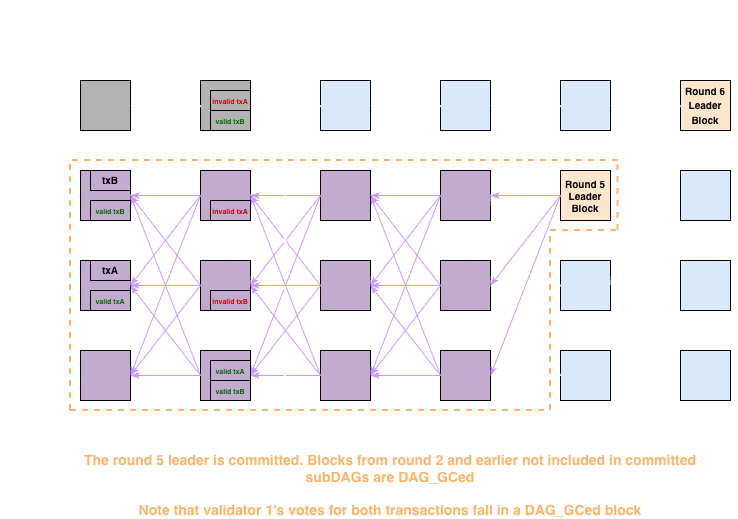

-

Assume the global DAG is in the state shown below. Notice the presence of two conflicting transactions,

txAandtxB, each receiving two implicit valid votes and two invalid votes. Under normal conditions, both transactions would eventually be rejected by the stale unfinalized transaction rule. This is because there are not enough invalid votes in the vote_cache to reject them directly, and there are also not enough implicit valid votes to accept either transaction during a vote recount.

-

Since none of the round 1 through round 4 leaders are committable, the first leader block to be committed is the round 5 leader block, resulting in the following state.

txAandtxBboth can't be transaction finalized yet. Theround is advanced after the round 5 leader block's commit.

-

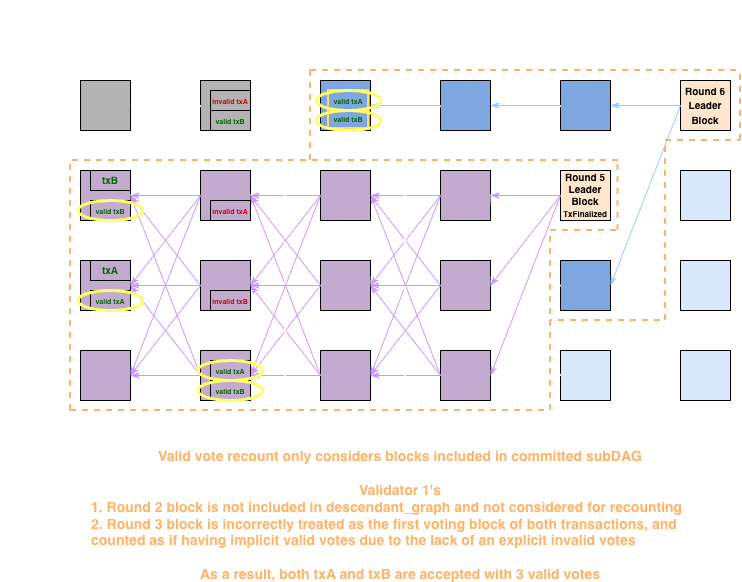

As the round 6 leader block gets committed, things get interesting.

- The first two blocks of validator 1 aren't included in the committed subDAG since they're already pruned. As a result, when populating

descendant_graphfor blocks in the round 6 leader block's subDAG, they also won't be included accept_recounttraversesdescendant_graphonly. By excluding validator 1's block with transaction votes fromdescendant_graph, the votes within it won't be counted- Consequently, validator 1's round 3 block will become the first validator 1 block to be traversed. Since there are no invalid votes within it, and valid votes are inferred from the lack of invalid votes,

accept_recountwill treat it as if the validator 1 cast valid votes for bothtxAandtxB - Both

txAandtxBgather 3 valid votes, and will be accepted

- The first two blocks of validator 1 aren't included in the committed subDAG since they're already pruned. As a result, when populating

In summary, premature DAG-GC makes vote recounting overly optimistic, which can cause invalid votes to be ignored and allow transactions that should be rejected to be accepted.

-

This is already a serious issue on its own, because it breaks Sui’s fundamental invariant that a single object must not be used more than once. But the problem doesn’t stop there.

-

The

vote_cachefor a given block is not guaranteed to be consistent across validators. It is populated pre-commit, and there is no guarantee that all honest validators will observe the exact same set of votes. More concretely, one validator may see a new block containing an invalid vote while other validators fail to receive that block for an extended period due to network delays.The implication may not be obvious at first, but a great way to think about it is: all consensus decisions must be globally agreed upon by honest validators. If any decision depends on local state that other validators may not share, there must be a strong justification for it.

Transaction finalization includes a

decide_with_cachestep. Without GC, we've proven both the following implementations are correct:- Rejecting transactions upon seeing a supermajority of invalid votes

- Accepting a transaction at commit if no invalid votes exist

However, once we consider the previous "over-optimistic vote counting", the proof no longer holds. Let's look at an example.

-

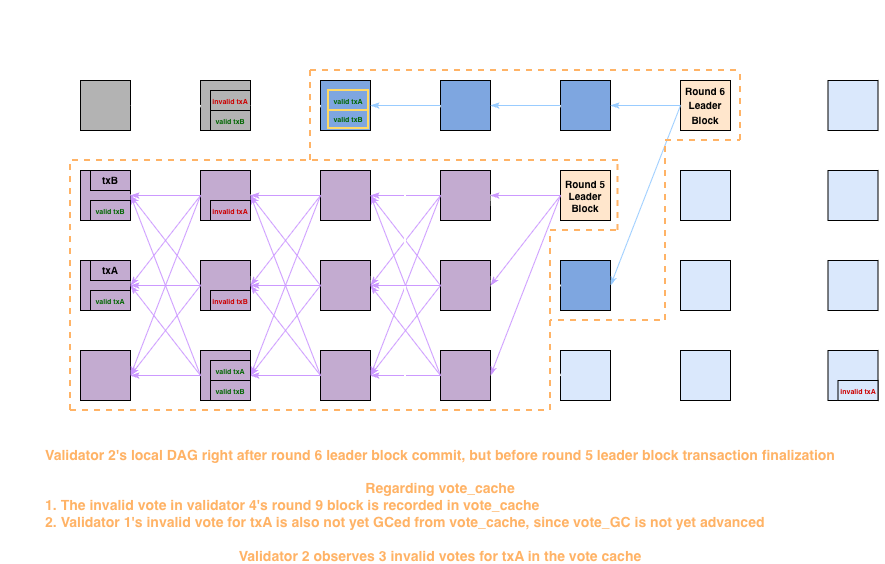

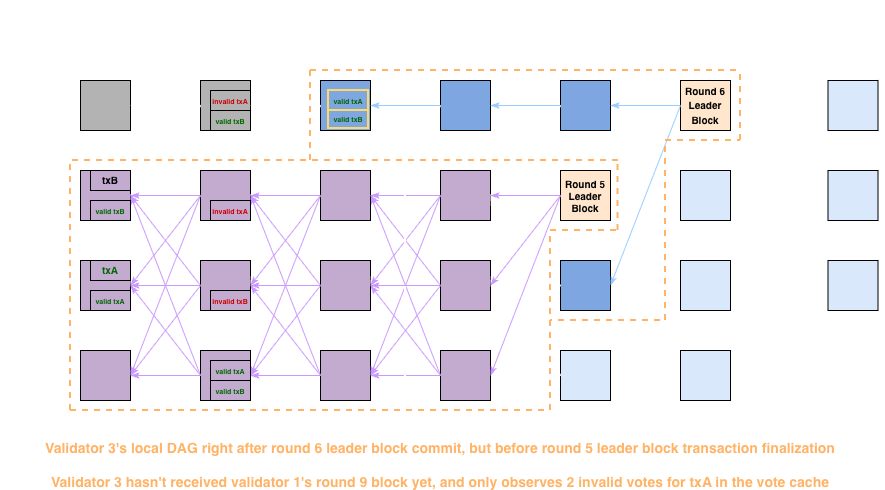

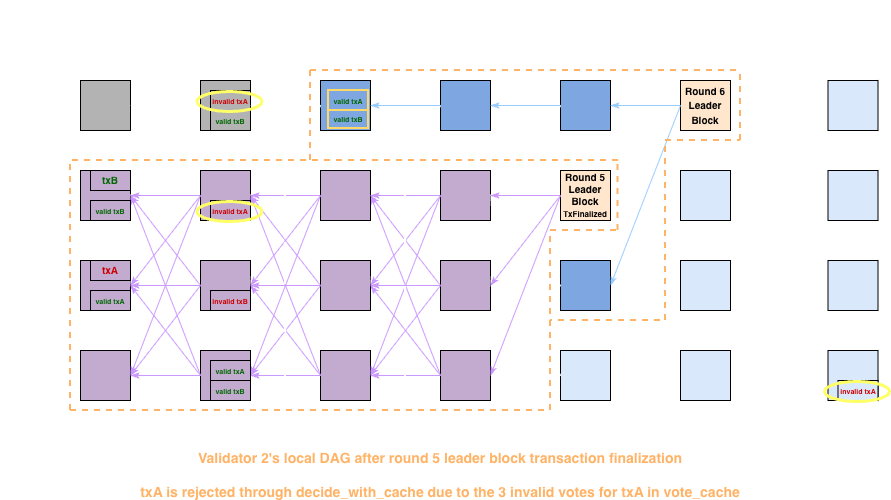

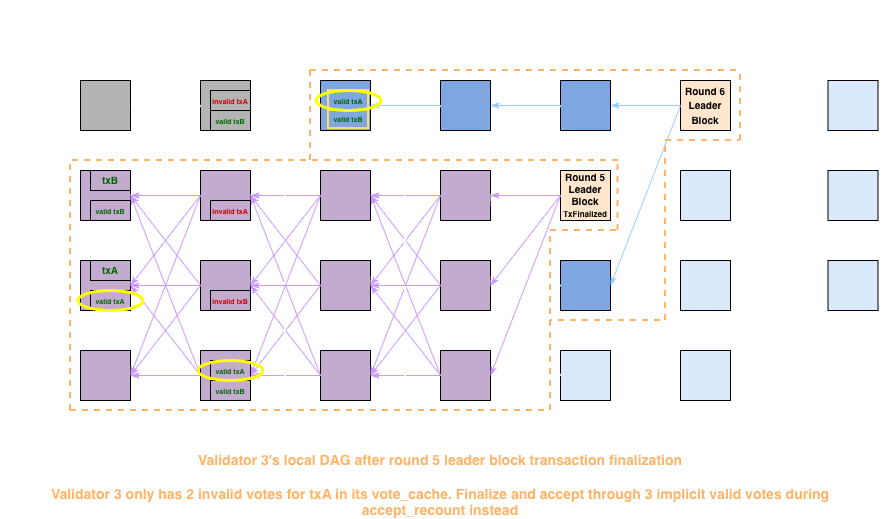

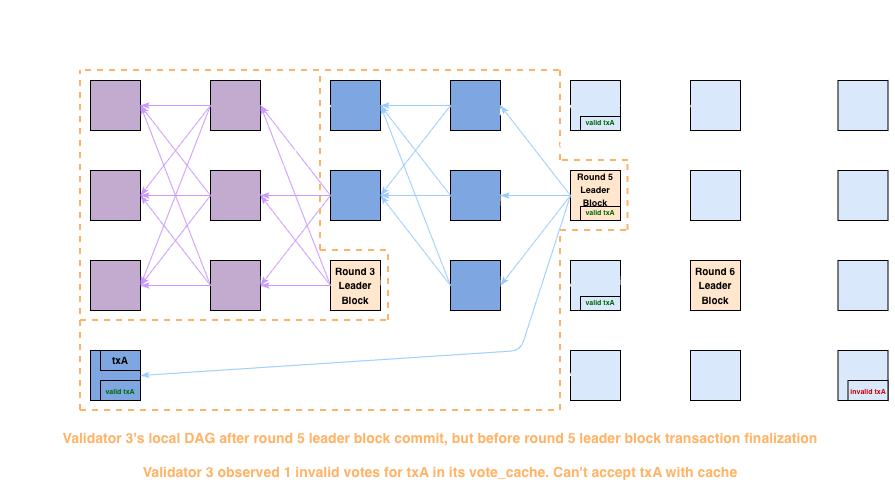

Shown here are validator 2 and 3's local DAGs upon committing the round 6 leader block. The DAG is largely the same as the previous example, except validator 2 now receives an additional round 9 block that includes an invalid vote for txA. Validator 3 hasn't received the block yet, demonstrating the temporal difference previously mentioned

- Validator 2's local DAG

- Validator 3's local DAG

There are a few important observations here worth pointing out:

- Before transaction finalization of the round 5 leader block's subDAG, the

round won't be advanced. As a result, even though validator 1's first few blocks are not included in the round 6 leader block's committed subDAG, the rejection votes still contribute toward vote_cache - Similarly, rejection votes from any not-yet-committed future block won't be included in

accept_recount, but they also contribute towardvote_cache

- Validator 2's local DAG

-

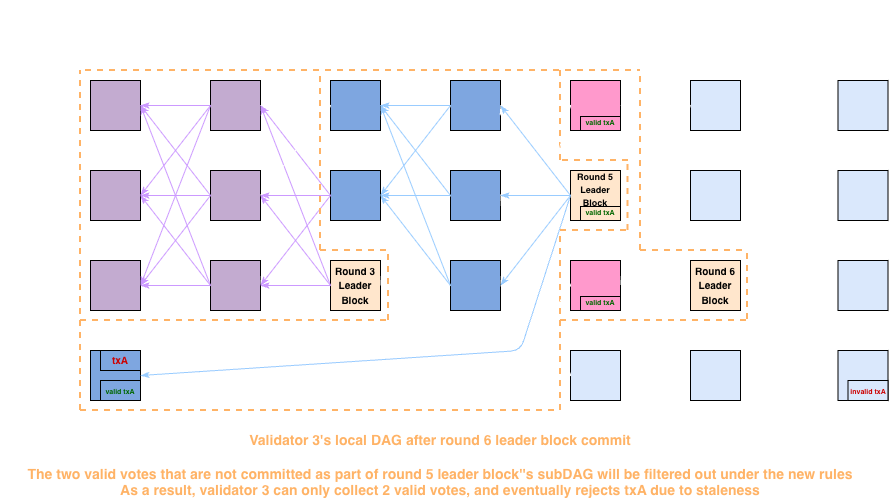

Upon attempting to transaction finalize the subDAG of the round 5 leader block, a problem immediately arises. Validator 2 can reject txA in the

decide_with_cachestep with its 3 invalid votes invote_cache, while validator 3 cannot. Furthermore, validator 3 has 3 valid votes due to optimistic recounting, and will accept txA-

Validator 2's local DAG

-

Validator 3's local DAG

-

At this point, it is evident that Mysticeti-FPC safety can be broken, with different validators reaching different decisions.

What exactly are the consequences of breaking the safety property? The most immediate impact is enabling double spending. Since different validators no longer agree on which transactions are executed, it is possible for a subset of validators to believe a coin is spent while the remaining validators believe it is not. This mismatch potentially allows attackers to spend a single coin multiple times.

Of course, there are quite some complications around how to weaponize such a primitive, including whether we're discussing purely on-chain activities or off-chain activities, whether off-chain components fetch data from full-nodes or trusted validators, as well as how adjacent mechanisms such as Sui's checkpoints affect attacks. None of these fundamentally prohibit attacks, but do make it more nuanced than it appears at first sight. To keep this post concise, we leave those topics for future discussions.

Analysis on the production parameters of

Patching Hiccups

How can the bug be patched? The most obvious way is to update DAG-GC to garbage collect after transaction finalization. This eliminates the gap between DAG-GC and Vote-GC. However, Sui's design puts transaction finalization and pre-commit Mysticeti-C logic (including DAG-GC) into separate tasks, making this fix non-trivial without taking some performance hits.

As a result, the initial patch took a different approach. Instead of adjusting when GC is done, the patch refines the vote counting mechanism to make it more conservative. On top of only counting the earliest vote of each validator, votes with the following property are also ignored:

The concept behind this is not straightforward, so let us explain. The problem with the original implementation is that there could be votes in GCed blocks that are missed, resulting in over-optimistic implicit valid vote counting. What if we try to avoid counting implicit valid votes when their self-ancestors might have been GCed? This would eliminate the problem, right?

The concept can be further dissected into 2 main ideas:

- If for some honest validator's block

, its self-ancestor contains a invalid vote that might have been GCed, the voted target block must satisfy . This is trivially true since honest validator's blocks only cast invalid votes for transactions within their causal history - If

is DAG-GCed by the time is committed as part of the subDAG of , it implies the previous commit must satisfy . This follows from the design of DAG-GC

Combining these two ideas and making it even slightly more restrictive, we get the rule implemented by the patch.

This successfully transforms the over-optimistic valid vote counting into a conservative one, is it sufficient to fix the bug?

Unfortunately, the answer is no. Recall that while proving mutual exclusivity of vote_cache acceptance and stale rejection, we relied on the observation that if a commit happens without any rejection votes, the certificate pattern serves as a supermajority of implicit valid votes. Under the conservative vote counting rule, this no longer holds.

With this safety property broken, attacks can be carried out as follows:

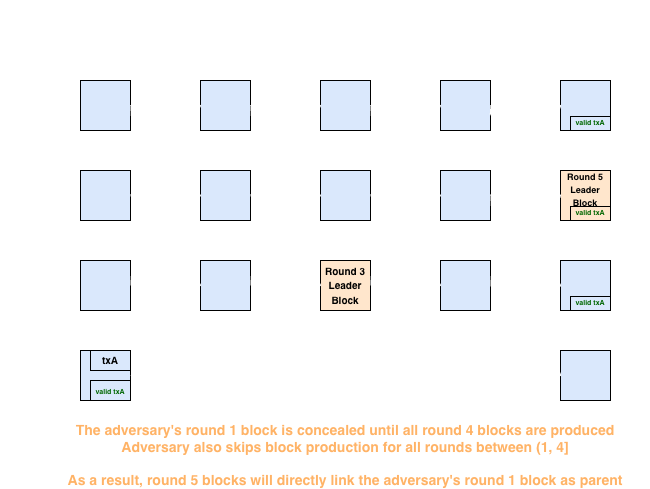

-

The adversary selects a round

of which it is the leader, and places txA in its round block . is however, concealed from all other validators at time of production. The adversary proceeds to skip block production for all rounds between . It then waits until all other validators produce their round blocks before broadcasting its . As a result, other validators will include as a parent of their round blocks

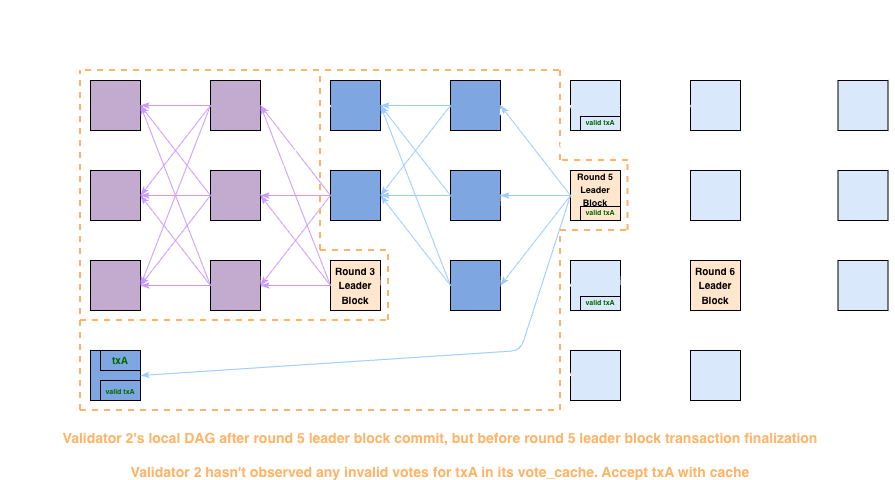

-

The adversary waits until right before the commit of the leader of round

(this happens at approximately round 8, since it takes around rounds to commit a leader block), and sends a block with an invalid vote for txA to victim validators. In this case, the victim is validator 3. -

Validators that haven't received the invalid vote will accept txA with the

vote_cache

-

Victim validators that received the invalid vote cannot accept txA with the

vote_cache

-

-

Victim validators that cannot finalize the transaction with cache will proceed to recount votes. By applying the new vote filtering rule, any votes for

will be ignored. Since most votes for txA will be committed by leaders from subDAGs where , their previous commit leader has . As a result, the votes will have , and become ignored. This makes it impossible to collect a supermajority of valid votes, and txA will be rejected

Apparently being over-conservative is also inappropriate. Validators still fail to agree on transaction finalization results, and safety failure remains possible.

Interestingly, not only does this patch fail to fix the bug, it unwittingly makes it easier to exploit. Attackers no longer have to rely on the 12 seconds asynchronous period required for votes to be DAG-GCed, and can instead carry out attacks simply by holding onto self-produced blocks and releasing them at the right moment to the right recipients.

The Followup Patch

To fix the issue introduced by the first patch, Sui added another constraint to transaction finalization. On a high level, the constraint can be understood as: instead of introducing more complexity to unify vote_cache acceptance and stale rejection, it is easier to skip vote_cache acceptance whenever the two finalization methods might disagree.

The most trivial implementation of the constraint is to get rid of vote_cache acceptance completely. By eliminating one of the two finalization methods, there would be no disagreements anymore. But doing so would likely introduce noticeable finalization latencies. Instead, Sui came up with something that is less intrusive, but achieves the same goal.

Observe that the only scenario where vote_cache acceptance and stale rejection may diverge is when implicit valid votes in a certificate pattern are ignored. As long as vote_cache acceptance is skipped when those votes are ignored, the disagreement would naturally disappear.

To achieve this, the patch gives the following constraint, where all vote_cache based acceptance.

Again, the constraint is not straightforward, so let's try to figure out how we ended up with it.

-

By substituting

with the certificate pattern voters of , we can write the constraint of the first patch as If we have the commits of all

, it will be trivial to check if any of them satisfy the constraint, and will have its implicit valid votes ignored. Unfortunately, we cannot afford to wait till being committed, since vote_cacheacceptance is calculated as soon asarrives -

While it is impossible to wait for commits of

, it is possible to anticipate when they might satisfy the constraint. A key observation is that by the Mysticeti-C commit property, a certificate pattern of committed must be contained within the causal history of committed if In other words, the entirety of

must be contained within causal history of the first commit where It immediately follows that

And consequently

Combining the two sub-constraints, it is now apparent any ignored implicit valid votes within

With this, Sui's Mysticeti-FPC implementation is finally made secure.

Final Thoughts

Once again, we see how a well-established garbage collection mechanism can easily become dangerous with even the slightest modification of the protocol, and how the most subtle implementation detail can end up undermining the security of the entire protocol. This highlights the delicacy of complex algorithms, as well as the difficulty of getting them right, even for some of the best researchers in the world.

Aside from this bug itself, a few notable episodes occurred during the patching process. Let's take a look at the timeline:

- 2025/10/30: We reported the safety bug to Sui

- 2025/12/06: The first patch was merged into the main branch, and made public to everyone

- 2025/12/09: We analyzed the merged patch, discovered the second bug, and notified Sui once again

- 2025/12/14: Sui experienced degraded consensus, allegedly due to a DDoS attack. This had us on the edge of our seats, fearing it was setting up asynchrony conditions to facilitate an exploit against Mysticeti-FPC. Fortunately, our monitoring of the chain spotted no successful attempts. Combined with the fact that Solana also experienced a DDoS at around the same time, we eventually decided it must be something unrelated to the consensus bug

- 2025/12/17: The first patch was included in a mainnet release

- 2026/01/11: The second patch was merged into the main branch, which once again, made it available to the public

- 2026/01/14: Sui suffered from an outage due to the bug in the first patch

- 2026/01/14: The second patch was included in an emergency mainnet release to address the outage

What do all these events entail? More than anything, they illustrate how fragile correctness can be in complex, highly optimized systems like modern consensus protocols.

What started as a relatively contained safety issue quickly evolved into a chain of subtle interactions: a fix introducing new edge cases, those edge cases surviving initial review, and eventually manifesting as a real-world outage. None of the individual steps were unreasonable in isolation, but together they highlight how difficult it is to reason about these systems end-to-end.

One key takeaway is that protocol correctness does not compose easily with optimizations. Mechanisms like garbage collection, caching, or execution shortcuts are often introduced to improve performance, but they implicitly rely on invariants that may no longer hold once the system evolves. When those assumptions are violated, even the slightest, the resulting behavior can diverge in ways that are hard to predict and even harder to detect through standard testing.

Another important lesson is that patches themselves must be treated as high-risk changes, especially in consensus-critical paths. Fixing a bug in such systems is not just about addressing the immediate issue, but also about ensuring that no new inconsistencies are introduced across different components or phases of the protocol.

Ultimately, this incident reinforces a broader point: the security of these systems is not determined solely by their high-level design, but by the correctness of every layer from specification, to implementation, to optimization. And as systems grow more complex, the gaps between these layers become where the most interesting (and dangerous) bugs tend to live.

If you've made it this far, you probably share our passion for digging into the guts of consensus algorithms and finding where theory meets (or breaks against) reality. This is what we do at Anatomist Security—deep infrastructure audits that go beyond surface-level code review to stress-test the assumptions underlying blockchain protocols.

Have a consensus mechanism, VM, or core protocol that needs a thorough examination? Reach out at @th3anatomist on X or Telegram. We'd love to take a look.